You have the best job of the 21st century. Like a treasure hunter, you sail through data lakes in search of a data treasure chest. In many companies you are a digital maker, armed with skills that turn you into a modern-day polymath and a toolset so holistic and complex that even astronauts would feel dizzy.

However, there is still something you carry every day to work: the burden of high expectations and demands of others – whether it is a customer, your supervisor or colleagues. Being a data scientist is a dream job, but also very stressful as it requires creative approaches and new solutions every day.

Would it not be great if there were something that made your daily work easier?

Many requirements and your need for a solution

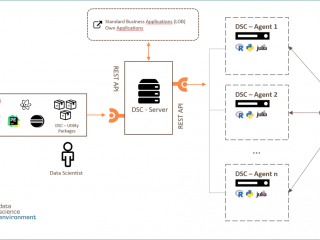

R, Python and Julia – does your department work with several programming languages? Would it not be great to have a solution that supports various languages and thus encourages what is so essential for data analysis: teamwork? Additionally, connectivity packages could enable you to keep working in a familiar environment such as RStudio.

Performance is everything: you create the most complex neural networks and process data quantities that make big data really deserve the word “big”. Imagine you could move your analyses into a controlled and scalable environment where your scripts run not only reliably but also with optimal load distribution. All of this would come with horizontal scaling and improved system performance.

Data sources, users and analysis scripts: you are in search of a tool that brings all components together in a bundled analysis project, so that you can manage resources more efficiently, increase transparency and develop a compliant workflow. Ideally, it offers role management that can easily be extended to the specialist departments.

Time is money. Of course, that also applies to your working time. You need a solution that frees you from time-consuming routine tasks, such as monitoring and parameterizing script execution, and that runs analyses on time-based triggers. Additionally, dynamic load distribution and logging of script output help operationalize script execution in a business-critical environment.

Keep an eye on the big picture: your high-performance analysis will not bring you the satisfaction you deserve if you cannot embed it into the existing IT landscape. A tool with consistent interfaces that integrates your analysis scripts neatly into any existing application via a REST API would be perfect to ease your daily workload.

eoda | data science core: a solution by data scientists for data scientists

Imagine a data science tool that builds on practical experience to help you realize your potential in bringing data science to the enterprise environment.

Based on many years of experience from analysis projects and our knowledge of your daily challenges, we have developed a solution for you: the eoda | data science core. It lets you manage your analysis projects flexibly, efficiently and securely. It gives you the space you need to deal with expectations and keep your love for the profession. It is, after all, the best job in the world.

The eoda | data science core is the first main component of the eoda | data science environment. It will be complemented by the second component, the eoda | data science portal. How does the portal enable collaborative working, explorative analyses and a user-friendly visualization of results? Find out in the next article.
